ACM A.M. TURING AWARD HONORS TWO RESEARCHERS WHO LED THE DEVELOPMENT OF CORNERSTONE AI TECHNOLOGY

Andrew Barto and Richard Sutton Recognized as Pioneers of Reinforcement Learning

ACM, the Association for Computing Machinery, today named Andrew Barto and Richard Sutton as the recipients of the 2024 ACM A.M. Turing Award for developing the conceptual and algorithmic foundations of reinforcement learning. In a series of papers beginning in the 1980s, Barto and Sutton introduced the main ideas, constructed the mathematical foundations, and developed important algorithms for reinforcement learning—one of the most important approaches for creating intelligent systems.

Barto is Professor Emeritus of Information and Computer Sciences at the University of Massachusetts, Amherst. Sutton is a Professor of Computer Science at the University of Alberta and a Research Scientist at Keen Technologies.

The ACM A.M. Turing Award, often referred to as the “Nobel Prize in Computing,” carries a $1 million prize with financial support provided by Google, Inc. The award is named for Alan M. Turing, the British mathematician who articulated the mathematical foundations of computing.

What is Reinforcement Learning?

The field of artificial intelligence (AI) is generally concerned with constructing agents—that is, entities that perceive and act. More intelligent agents are those that choose better courses of action. Therefore, the notion that some courses of action are better than others is central to AI. Reward—a term borrowed from psychology and neuroscience—denotes a signal provided to an agent related to the quality of its behavior. Reinforcement learning (RL) is the process of learning to behave more successfully given this signal.

The idea of learning from reward has been familiar to animal trainers for thousands of years. Later, Alan Turing’s 1950 paper “Computing Machinery and Intelligence” addressed the question “Can machines think?” and proposed an approach to machine learning based on rewards and punishments.

While Turing reported having conducted some initial experiments with this approach and Arthur Samuel developed a checker-playing program in the late 1950s that learned from self-play, little further progress occurred in this vein of AI in the following decades. In the early 1980s, motivated by observations from psychology, Barto and his PhD student Sutton began to formulate reinforcement learning as a general problem framework.

They drew on the mathematical foundation provided by Markov decision processes (MDPs), wherein an agent makes decisions in a stochastic (randomly determined) environment, receiving a reward signal after each transition and aiming to maximize its long-term cumulative reward. Whereas standard MDP theory assumes that everything about the MDP is known to the agent, the RL framework allows for the environment and the rewards to be unknown. The minimal information requirements of RL, combined with the generality of the MDP framework, allow RL algorithms to be applied to a vast range of problems, as explained further below.
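As an illustration only, the objective described above is often written in the following way. The notation is not taken from this announcement: the discount factor \gamma, the policy \pi, and the symbols R_t and G_t are assumed here, following common textbook convention.

```latex
% Return from time t: the (discounted) sum of future rewards.
% \gamma \in [0, 1] is an assumed discount factor; \gamma = 1 gives the plain cumulative sum.
G_t = \sum_{k=0}^{\infty} \gamma^{k} R_{t+k+1}

% The agent seeks a policy \pi that maximizes the expected return from each state s.
v_{\pi}(s) = \mathbb{E}_{\pi}\left[ G_t \mid S_t = s \right],
\qquad
\pi^{*} \in \arg\max_{\pi} v_{\pi}(s)
```

In the RL setting, the transition probabilities and rewards that determine these expectations are not given to the agent and must be estimated from experience.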

Barto and Sutton, jointly and with others, developed many of the basic algorithmic approaches for RL. These include their foremost contribution, temporal difference learning, which made an important advance in solving reward prediction problems, as well as policy-gradient methods and the use of neural networks as a tool to represent learned functions. They also proposed agent designs that combined learning and planning, demonstrating the value of acquiring knowledge of the environment as a basis for planning.
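To give a rough sense of what temporal difference learning involves, the sketch below applies a one-step TD update to a small random-walk prediction task. It is a minimal illustration under stated assumptions, not code from Barto and Sutton: the environment, the function name `td0_random_walk`, and the parameters `alpha` (step size) and `gamma` (discount factor) are all chosen here for the example.

```python
import random


def td0_random_walk(num_states=5, episodes=1000, alpha=0.05, gamma=1.0, seed=0):
    """Estimate state values for a simple random walk with one-step TD updates.

    Hypothetical setup: states 1..num_states are non-terminal, states 0 and
    num_states + 1 are terminal, and the only reward (1.0) is received when
    the walk exits on the right.
    """
    rng = random.Random(seed)
    # Value estimates, including the two terminal states (which stay at 0).
    values = [0.0] * (num_states + 2)

    for _ in range(episodes):
        state = (num_states + 1) // 2  # start each episode in the middle
        while 0 < state < num_states + 1:
            next_state = state + rng.choice((-1, 1))  # step left or right
            reward = 1.0 if next_state == num_states + 1 else 0.0
            # TD error: how much the one-step lookahead disagrees with
            # the current estimate for this state.
            td_error = reward + gamma * values[next_state] - values[state]
            # Move the estimate a small step toward the lookahead target.
            values[state] += alpha * td_error
            state = next_state

    return values[1:-1]  # estimates for the non-terminal states only


if __name__ == "__main__":
    for i, v in enumerate(td0_random_walk(), start=1):
        print(f"state {i}: estimated value {v:.2f}")
```

The agent never sees the transition probabilities; it adjusts each prediction using only the reward it observed and its own prediction for the next state, which is the sense in which the method learns from experience rather than from a known model.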

Perhaps equally influential was their textbook, Reinforcement Learning: An Introduction (1998), which is still the standard reference in the field and has been cited over 75,000 times. It allowed thousands of researchers to understand and contribute to this emerging field and continues to inspire much significant research activity in computer science today.

Although Barto and Sutton’s algorithms were developed decades ago, major advances in the practical applications of RL came about in the past fifteen years by merging RL with deep learning algorithms (pioneered by 2018 Turing Awardees Bengio, Hinton, and LeCun). This led to the technique of deep reinforcement learning.

The most prominent example of RL was the victory by the AlphaGo computer program over the best human Go players in 2016 and 2017. Another major recent achievement has been the development of the chatbot ChatGPT. ChatGPT is a large language model (LLM) trained in two phases, the second of which employs a technique called reinforcement learning from human feedback (RLHF) to capture human expectations.

RL has achieved success in many other areas as well. A high-profile research example is robot motor skill learning in the in-hand robotic manipulation and solution of a physical puzzle (Rubik’s Cube), which showed that it is possible to do all the reinforcement learning in simulation yet ultimately be successful in the significantly different real world.

Other areas include network congestion control, chip design, internet advertising, optimization, global supply chain optimization, improving the behavior and reasoning capabilities of chatbots, and even improving algorithms for one of the oldest problems in computer science, matrix multiplication.

Finally, a technology that was partly inspired by neuroscience has returned the favor. Recent research, including work by Barto, has shown that specific RL algorithms developed in AI provide the best explanations for a wide range of findings concerning the dopamine system in the human brain.

“Barto and Sutton’s work demonstrates the immense potential of applying a multidisciplinary approach to longstanding challenges in our field,” explains ACM President Yannis Ioannidis. “Research areas ranging from cognitive science and psychology to neuroscience inspired the development of reinforcement learning, which has laid the foundations for some of the most important advances in AI and has given us greater insight into how the brain works. Barto and Sutton’s work is not a stepping stone that we have now moved on from. Reinforcement learning continues to grow and offers great potential for further advances in computing and many other disciplines. It is fitting that we are honoring them with the most prestigious award in our field.”

“In a 1947 lecture, Alan Turing stated ‘What we want is a machine that can learn from experience,’” noted Jeff Dean, Chief Scientist of Google. “Reinforcement learning, as pioneered by Barto and Sutton, directly answers Turing’s challenge. Their work has been a lynchpin of progress in AI over the last several decades. The tools they developed remain a central pillar of the AI boom and have rendered major advances, attracted legions of young researchers, and driven billions of dollars in investments. RL’s impact will continue well into the future. Google is proud to sponsor the ACM A.M. Turing Award and honor the individuals who have shaped the technologies that improve our lives.”

Biographical Background

Andrew G. Barto

Andrew Barto is Professor Emeritus, Department of Information and Computer Sciences, University of Massachusetts, Amherst. He began his career at UMass Amherst as a postdoctoral Research Associate in 1977, and has subsequently held various positions including Associate Professor, Professor, and Department Chair. Barto received a BS degree in Mathematics (with distinction) from the University of Michigan, where he also earned his MS and PhD degrees in Computer and Communication Sciences.

Barto’s honors include the UMass Neurosciences Lifetime Achievement Award, the IJCAI Award for Research Excellence, and the IEEE Neural Network Society Pioneer Award. He is a Fellow of the Institute of Electrical and Electronics Engineers (IEEE), and a Fellow of the American Association for the Advancement of Science (AAAS).

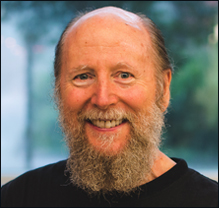

Richard S. Sutton

Richard Sutton is a Professor in Computing Science at the University of Alberta, a Research Scientist at Keen Technologies (an artificial general intelligence company based in Dallas, Texas), and Chief Scientific Advisor of the Alberta Machine Intelligence Institute (Amii). Sutton was a Distinguished Research Scientist at DeepMind from 2017 to 2023. Prior to joining the University of Alberta, he served as a Principal Technical Staff Member in the Artificial Intelligence Department at the AT&T Shannon Laboratory in Florham Park, New Jersey, from 1998 to 2002. Sutton’s collaborations with Andrew Barto began in 1978 at the University of Massachusetts at Amherst, where Barto was Sutton’s PhD and postdoctoral advisor. Sutton received his BA in Psychology from Stanford University and earned his MS and PhD degrees in Computer and Information Science from the University of Massachusetts at Amherst.

Sutton’s honors include the IJCAI Research Excellence Award, a Lifetime Achievement Award from the Canadian Artificial Intelligence Association, and an Outstanding Achievement in Research Award from the University of Massachusetts at Amherst. Sutton is a Fellow of the Royal Society of London, a Fellow of the Association for the Advancement of Artificial Intelligence, and a Fellow of the Royal Society of Canada.

The A.M. Turing Award, the ACM's most prestigious technical award, is given for major contributions of lasting importance to computing.

This site celebrates all the winners since the award's creation in 1966. It contains biographical information, a description of their accomplishments, straightforward explanations of their fields of specialization, and text or video of their A. M. Turing Award Lecture.

The A.M. Turing Award, sometimes referred to as the "Nobel Prize in Computing," was named in honor of Alan Mathison Turing (1912–1954), a British mathematician and computer scientist. He made fundamental advances in computer architecture, algorithms, formalization of computing, and artificial intelligence. Turing was also instrumental in British code-breaking work during World War II.

THE A.M. TURING AWARD
